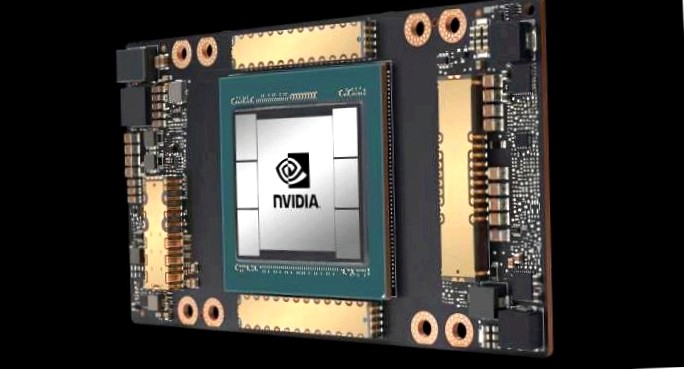

1.8 exaflops of AI computing power: Tesla's supercomputer for Autopilot

Tesla has shown the latest expansion stage of its in-house supercomputer, which is responsible for training the Autopilot of all Tesla vehicles. It uses 720 nodes, each with eight Nvidia A100 GPU accelerators in the version with 80 GB of stacked HBM2e memory, i.e. 5,760 GPUs in total.

With all integrated tensor cores used for AI calculations, the system delivers FP16 computing power of around 1.8 exaflops. The shader cores manage just under 56 petaflops at FP64. If Tesla were to enter its supercomputer in the Top500 list of the world's fastest supercomputers, it would currently rank among the top ten systems. So far, however, private company systems have not appeared there.
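
As a rough plausibility check, the quoted totals can be reproduced with simple arithmetic. The sketch below is in Python. The per-GPU peak values (312 TFLOPS for FP16 on the tensor cores, 9.7 TFLOPS for FP64 on the shader cores) are not stated in the article. They are assumed here from Nvidia's published A100 specifications, and they are theoretical peaks rather than measured performance.

```python
# Figures taken from the article
NODES = 720
GPUS_PER_NODE = 8

# Assumption (not in the article): theoretical per-GPU peaks
# from Nvidia's published A100 specifications, in TFLOPS
FP16_TENSOR_TFLOPS_PER_GPU = 312.0  # dense FP16 on tensor cores
FP64_TFLOPS_PER_GPU = 9.7           # FP64 on the regular shader cores

TFLOPS_PER_PFLOPS = 1_000
TFLOPS_PER_EFLOPS = 1_000_000


def total_gpus(nodes: int, gpus_per_node: int) -> int:
    """Number of GPUs in the whole cluster."""
    return nodes * gpus_per_node


def aggregate_tflops(gpu_count: int, tflops_per_gpu: float) -> float:
    """Theoretical aggregate peak, ignoring interconnect and scaling losses."""
    return gpu_count * tflops_per_gpu


gpus = total_gpus(NODES, GPUS_PER_NODE)
fp16_exaflops = aggregate_tflops(gpus, FP16_TENSOR_TFLOPS_PER_GPU) / TFLOPS_PER_EFLOPS
fp64_petaflops = aggregate_tflops(gpus, FP64_TFLOPS_PER_GPU) / TFLOPS_PER_PFLOPS

print(f"GPUs in total: {gpus}")                      # 5760
print(f"FP16 (tensor): {fp16_exaflops:.2f} EFLOPS")  # 1.80 EFLOPS
print(f"FP64 (shader): {fp64_petaflops:.2f} PFLOPS") # 55.87 PFLOPS
```

Both results match the article's rounded figures. Note that the Top500 ranking is based on a measured benchmark run, so a theoretical FP64 peak like this only hints at where a system might land.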