1.8 exaflops of AI computing power: Tesla’s supercomputer for Autopilot

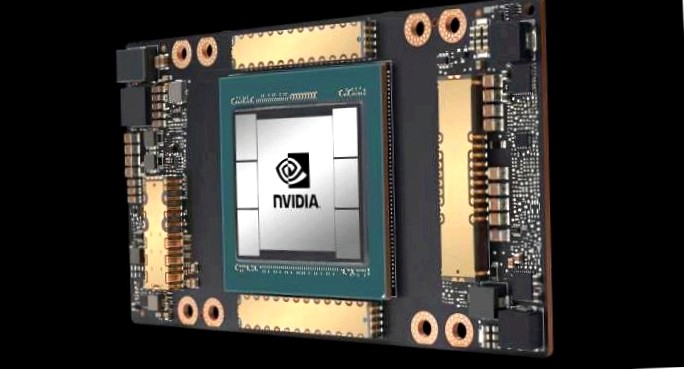

Tesla has shown the latest build-out of its in-house supercomputer, which is responsible for training the Autopilot of all Tesla vehicles. It uses 720 nodes, each with eight Nvidia A100 GPU accelerators in the version with 80 GB of stacked HBM2e memory, for 5,760 GPUs in total.

With all integrated tensor cores used for AI calculations, the FP16 computing power comes to around 1.8 exaflops. The shader cores deliver just under 56 petaflops at FP64. If Tesla entered its supercomputer in the Top500 list of the world’s fastest supercomputers, it would currently rank among the top ten systems. So far, however, private company systems have not appeared there.
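The totals above can be reproduced with a short back-of-the-envelope calculation. The sketch below is only an estimate of theoretical peak throughput: the node and GPU counts come from the article, while the per-GPU rates (312 teraflops FP16 on the tensor cores without sparsity, 9.7 teraflops FP64 on the regular cores) are assumptions taken from Nvidia's published A100 specifications, not from Tesla.

```python
# Figures from the article
NODES = 720
GPUS_PER_NODE = 8

# Assumption (not from the article): per-GPU peak rates as listed in
# Nvidia's A100 specifications, dense FP16 on tensor cores and plain FP64.
A100_FP16_TENSOR_TFLOPS = 312.0
A100_FP64_TFLOPS = 9.7


def cluster_peak(nodes, gpus_per_node, tflops_per_gpu):
    """Return (gpu_count, aggregate theoretical peak in teraflops)."""
    gpu_count = nodes * gpus_per_node
    return gpu_count, gpu_count * tflops_per_gpu


gpu_count, fp16_tflops = cluster_peak(NODES, GPUS_PER_NODE, A100_FP16_TENSOR_TFLOPS)
_, fp64_tflops = cluster_peak(NODES, GPUS_PER_NODE, A100_FP64_TFLOPS)

fp16_exaflops = fp16_tflops / 1e6   # 1 exaflop = 1e6 teraflops
fp64_petaflops = fp64_tflops / 1e3  # 1 petaflop = 1e3 teraflops

print(f"GPUs: {gpu_count}")
print(f"FP16 tensor peak: {fp16_exaflops:.2f} exaflops")
print(f"FP64 peak: {fp64_petaflops:.1f} petaflops")
```

These are peak values that assume every GPU runs at its rated maximum; a Top500 ranking is based on a measured benchmark result, which would come out lower.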

Three clusters

Andrej Karpathy, head of Tesla’s AI team, revealed the details of the supercomputer at the Conference on Computer Vision and Pattern Recognition (CVPR) 2021 (YouTube recording with timestamp). The system described is the newest of three clusters that together make up the total computing performance available.

PCI Express SSDs with a capacity of 10 PB accompany the A100 GPU accelerators. The interconnect linking all nodes handles 640 terabits per second. A large part of the training model fits into the total of almost 461 TB of particularly fast HBM2e RAM on the A100 cards.
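The memory figure follows directly from the GPU count. As a rough check, the sketch below multiplies the 5,760 GPUs by 80 GB each and converts the interconnect rate from bits to bytes. It assumes decimal units (1 TB = 1,000 GB), which the article does not state explicitly.

```python
# Figures from the article
GPU_COUNT = 720 * 8          # 720 nodes with eight A100 cards each
HBM_PER_GPU_GB = 80          # HBM2e capacity per A100
INTERCONNECT_TBIT_S = 640    # aggregate interconnect rate

# Assumption: decimal units, 1 TB = 1000 GB
total_hbm_tb = GPU_COUNT * HBM_PER_GPU_GB / 1000

# 8 bits per byte, so terabits/s divided by 8 gives terabytes/s
interconnect_tbyte_s = INTERCONNECT_TBIT_S / 8

print(f"Total HBM2e: {total_hbm_tb:.1f} TB")
print(f"Interconnect: {interconnect_tbyte_s:.0f} TB/s")
```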

Karpathy did not reveal details of the processors installed. AMD’s Epyc CPUs or Ampere’s Altra Arm models would be the obvious candidates, since both support PCI Express 4.0, as do the A100 cards. For Intel’s Ice Lake-SP processors, the timing would likely have been too tight.

1.5 petabytes of training data

To train the Autopilot, Tesla uses around 1.5 petabytes of data collected from Tesla vehicles in real-world use. Each car records eight video streams with a resolution of 1280 × 960 pixels at 36 frames per second. Videos from one million vehicles, each 10 seconds long, form the training base.
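To get a feel for these numbers, the frame count behind the dataset can be estimated. The sketch below is a rough calculation under two assumptions that the article does not confirm: each of the one million vehicles contributes exactly one 10-second clip containing all eight streams, and the 1.5 petabytes are decimal (1.5 × 10^15 bytes) and consist only of video.

```python
# Figures from the article
STREAMS_PER_CAR = 8
WIDTH, HEIGHT = 1280, 960
FPS = 36
CLIP_SECONDS = 10
DATASET_BYTES = 1.5e15       # 1.5 PB, assumed decimal

# Assumption: one 10-second clip (all eight streams) per vehicle
CLIPS = 1_000_000

frames_per_clip = STREAMS_PER_CAR * FPS * CLIP_SECONDS
total_frames = frames_per_clip * CLIPS
pixels_per_frame = WIDTH * HEIGHT

# Average storage per frame implied by the totals (video only, see assumptions)
avg_bytes_per_frame = DATASET_BYTES / total_frames

print(f"Frames per clip: {frames_per_clip}")
print(f"Total frames: {total_frames:,}")
print(f"Pixels per frame: {pixels_per_frame:,}")
print(f"Implied average size per frame: {avg_bytes_per_frame / 1000:.0f} kB")
```

Because the dataset may also contain labels and other sensor data, the per-frame figure is at best an upper bound on the stored video size.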

The neural network is trained in so-called shadow mode: the untested AI runs along during real drives but does not actively control the car. Errors are evaluated and corrected afterwards.
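The idea of shadow mode can be illustrated with a toy example. The sketch below is not Tesla's implementation: the `Frame` structure, the steering values, and the tolerance are all hypothetical, and it assumes that an "error" means a large deviation between the model's output and what the human driver actually did.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    """One time step of a recorded drive (hypothetical structure)."""
    timestamp: float
    driver_steering: float   # what the human driver actually did
    shadow_steering: float   # what the untested model would have done


def find_disagreements(frames, tolerance=0.1):
    """Return frames where the shadow output deviates from the driver.

    The shadow output is only compared and logged, never applied to the car.
    """
    return [
        f for f in frames
        if abs(f.shadow_steering - f.driver_steering) > tolerance
    ]


# Hypothetical recorded drive with made-up values
drive = [
    Frame(0.0, 0.00, 0.02),
    Frame(0.1, 0.05, 0.04),
    Frame(0.2, 0.10, 0.45),  # model disagrees strongly with the driver
]

flagged = find_disagreements(drive)
print([f.timestamp for f in flagged])
```

In this toy setup, the flagged frames would be the candidates for later review and retraining.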